About

YVIC Research studies compact language models and how they behave under limited capacity. The work focuses on representation geometry, controllable behavior, and efficient language systems that run under real-world deployment constraints.

Research interests

- Compact language models and semantic structure
- Representation-level analysis of prompt and prefix control
- Generalization after pretraining
- On-device language systems

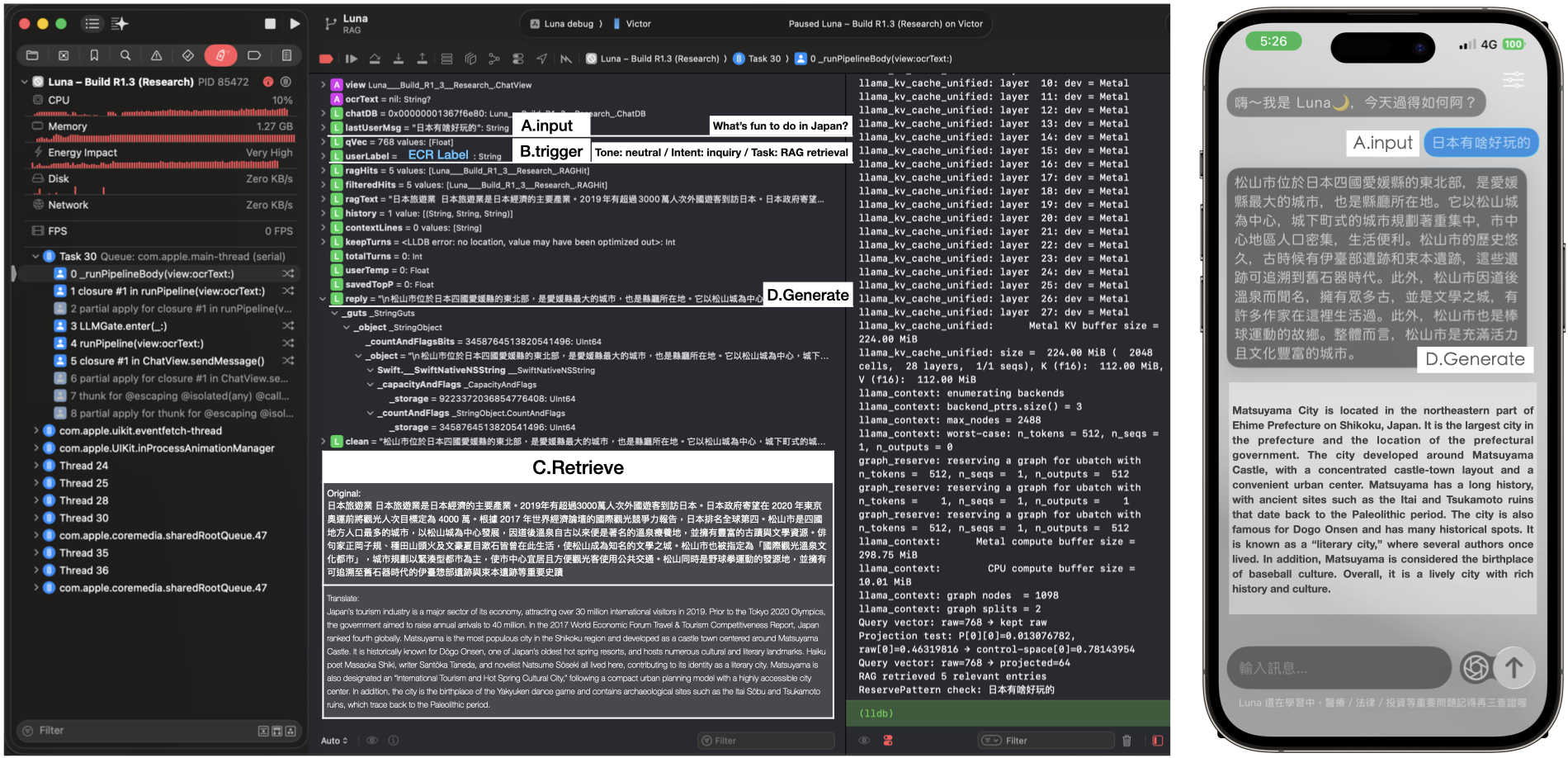

Luna (on-device system)

Luna is an offline language system built for studying compact models in practical settings. It is used to test local inference, retrieval, and on-device interaction on consumer hardware.

Luna is maintained as a research system for local and private language model deployment.